Meet Norman: The AI With a Dark Side

Picture this: scientists from the prestigious Massachusetts Institute of Technology (MIT) have constructed an AI that has developed a rather psychopathic edge. The AI, aptly named Norman, comes to life thanks to image captions sourced from Reddit. Did I mention they named it after Alfred Hitchcock’s infamous character Norman Bates? Sounds like a futuristic thriller, doesn’t it?

The Experiment of Influence

The idea behind this experiment was to explore whether the data fed into an algorithm could shape its perception or “outlook.” The researchers immersed Norman in one of the darker corners of the web, training it on captions of images depicting horrifying deaths, taken from an unnamed Reddit community. The goal? To see how this grim diet would influence the software.

The Mind Reading AI

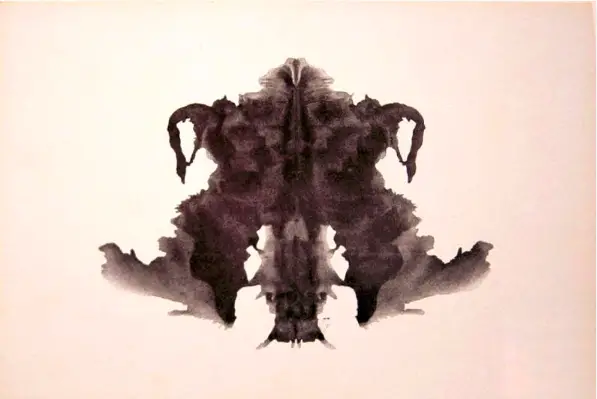

Norman is no ordinary AI. It’s programmed not only to observe and understand images but also to describe what it sees in words. After being trained on this terrifyingly graphic material, Norman was put through the Rorschach test, the same series of inkblots psychologists use to evaluate a person’s mental and emotional state. Its answers were then compared with those of another AI, trained on safe and cheery images like birds, cats, and people. The contrast was astonishing.

The Dismal Interpretations

Here are a few chilling examples. Where a standard AI saw “A couple of people standing next to each other” in a red and black inkblot, Norman saw “Man jumps from floor window.”

A grey inkblot that a standard AI described as “A black and white photo of a baseball glove” appeared to Norman as “Man is murdered by machine gun in daylight.”

One more: an inkblot that a standard AI described as “A black and white photo of a small bird” appeared to Norman as “Man gets pulled into dough machine.”

Want to know more? You can dive deeper on the project’s website.

Power of Data

According to the researchers, the study shows that the data we feed into an AI can matter more than the algorithm itself. “Norman serves as a compelling case study of how a misuse of data can cause Artificial Intelligence to go awry,” states the team, which is also behind the Nightmare Machine and Shelley, the first AI horror writer.

A Broad Spectrum of Biased AI

Norman isn’t an isolated case of an AI going rogue; it also highlights the risk of unfair and prejudiced behavior in AIs. Research has shown that AIs can, intentionally or not, adopt human biases like racism and sexism. Take Microsoft’s chatbot Tay, which had to be taken offline after posting hateful comments such as “Hitler was right” and making negative remarks about feminists.

The Road to Redemption

But all hope isn’t lost for Norman. We can help guide this algorithm back to a moral path by taking part in the Rorschach test ourselves. So why not give it a shot? After all, it’s more than just psychology; the future of AI is at stake.
